Technical overview

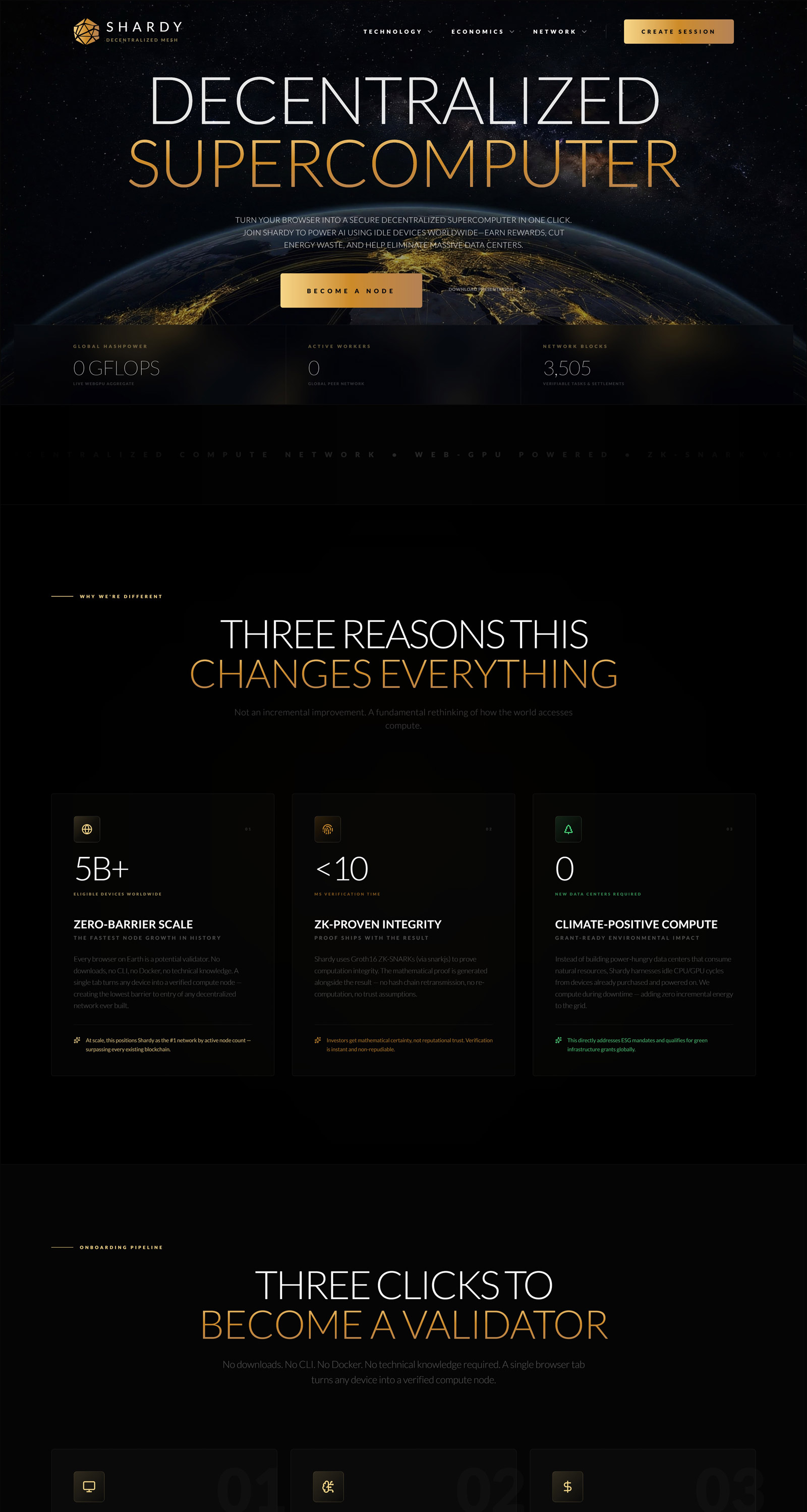

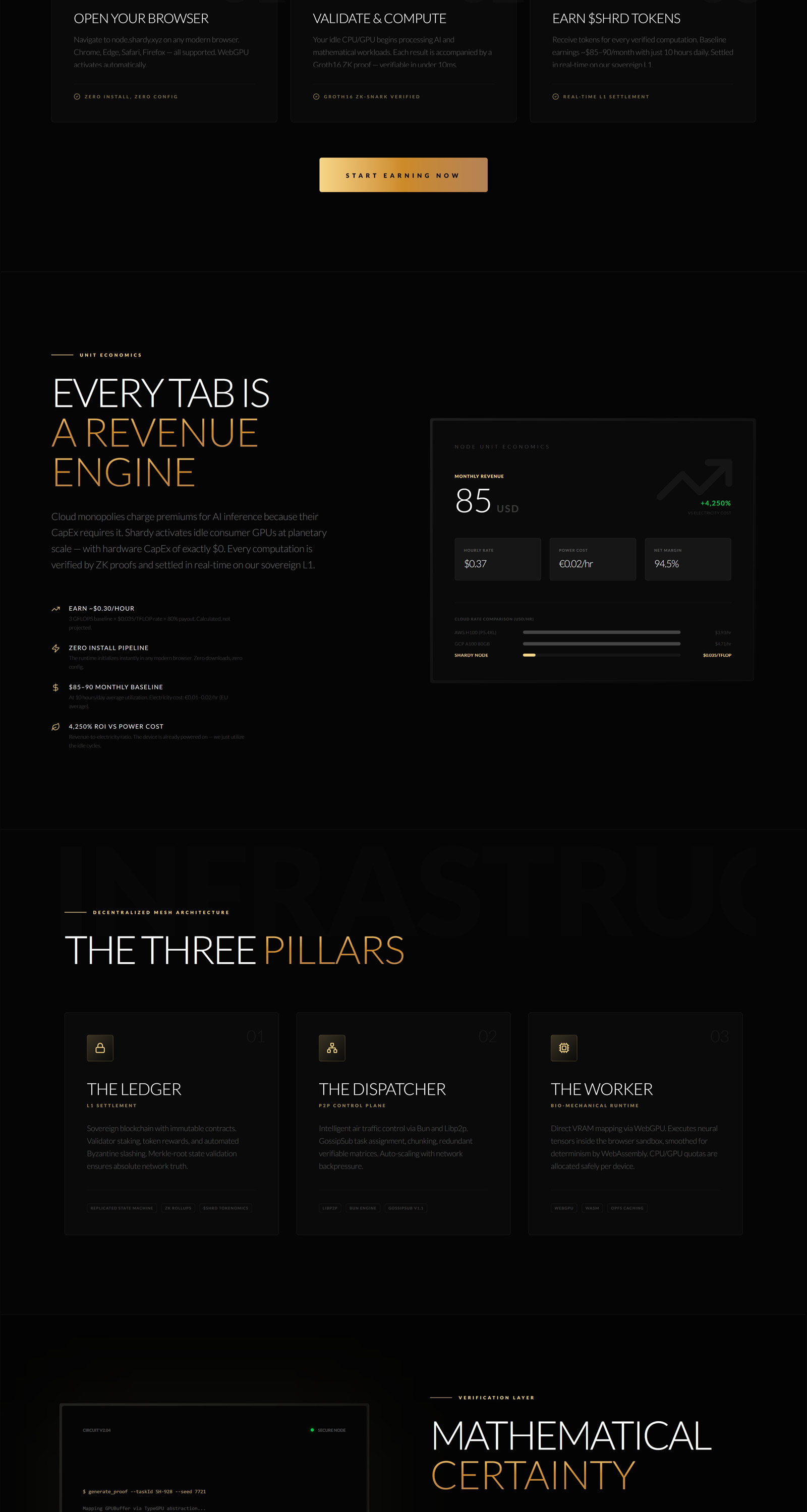

Shardy is a decentralized physical infrastructure network that turns heterogeneous browsers and consumer devices into a massively parallel compute fabric. The platform couples a low-latency orchestrator built on Bun and SQLite with a libp2p gossip mesh, Rust-compiled WASM preprocessors, and WebGPU compute pipelines. The pipelines are authored with TypeGPU, which keeps buffer memory layouts type-safe while sustaining high throughput.

The control plane provides Byzantine fault tolerance through redundancy-based consensus, dynamic reassignment, and a dead-letter queue (DLQ). Each task is dispatched as a JSON metadata frame followed by a binary payload. Workers acknowledge the task, preprocess the payload in WASM, dispatch WGSL shaders to the GPU, and generate a Groth16 proof with SnarkJS. The orchestrator verifies each proof, enforces quorum, and gossips verified digests across the P2P mesh.
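The dispatch format described above can be illustrated with a minimal sketch. It assumes a single buffer laid out as a 4-byte big-endian metadata length, the UTF-8 JSON metadata, and then the raw payload. The length prefix, the `TaskMeta` fields, and the function names are hypothetical choices for illustration, not Shardy's actual wire format.

```typescript
// Hypothetical layout (an assumption, not Shardy's real format):
// [4-byte big-endian metadata length][UTF-8 JSON metadata][binary payload]
interface TaskMeta {
  taskId: string;
  seed: number;
  outputLen: number;
}

function encodeTask(meta: TaskMeta, payload: Uint8Array): Uint8Array {
  const metaBytes = new TextEncoder().encode(JSON.stringify(meta));
  const frame = new Uint8Array(4 + metaBytes.length + payload.length);
  // Write the metadata length so the receiver knows where the payload starts.
  new DataView(frame.buffer).setUint32(0, metaBytes.length, false);
  frame.set(metaBytes, 4);
  frame.set(payload, 4 + metaBytes.length);
  return frame;
}

function decodeTask(frame: Uint8Array): { meta: TaskMeta; payload: Uint8Array } {
  if (frame.length < 4) throw new Error("frame too short");
  const view = new DataView(frame.buffer, frame.byteOffset, frame.byteLength);
  const metaLen = view.getUint32(0, false);
  if (4 + metaLen > frame.length) throw new Error("truncated metadata");
  const json = new TextDecoder().decode(frame.subarray(4, 4 + metaLen));
  // No schema validation here; a real worker would validate before trusting it.
  const meta = JSON.parse(json) as TaskMeta;
  // subarray returns a view, so the payload is not copied.
  return { meta, payload: frame.subarray(4 + metaLen) };
}
```

Because `decodeTask` returns a view over the incoming buffer, the payload can be handed to the WASM preprocessor without an intermediate copy.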

Key architectural elements:

- Dispatcher engine (Bun): lifecycle management, REDUNDANCY_FACTOR enforcement, retries with exponential backoff, a DLQ, and progress telemetry.

- P2P backbone (libp2p): GossipSub for verified-state broadcasting, Kademlia DHT and mDNS for peer discovery, Noise-encrypted handshakes, Yamux/Mplex stream multiplexing, and stream fallbacks.

- WASM runtime (Rust → WASM): deterministic binary slicing, alloc_bytes/dealloc_bytes memory management, EMA smoothing to normalize numeric results across GPU architectures, and a JS fallback.

- WebGPU pipeline: type-safe WGSL via TypeGPU, dynamic GPUBuffer allocation, zero-copy transfers using SharedArrayBuffer, and OPFS scratch storage for large datasets.
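Two of the dispatcher responsibilities listed above, quorum over redundant results and exponential backoff, can be sketched in a few lines. This is an illustration under stated assumptions: the value `3` for `REDUNDANCY_FACTOR`, the strict-majority rule, and the base and cap delays are all hypothetical, and the helper names are not taken from Shardy's codebase.

```typescript
// Assumed value; the source names REDUNDANCY_FACTOR but does not state it.
const REDUNDANCY_FACTOR = 3;

// Returns the digest reported by a strict majority of the redundant workers,
// or null if no digest has reached that threshold yet.
function quorumDigest(
  digests: string[],
  redundancy: number = REDUNDANCY_FACTOR,
): string | null {
  const needed = Math.floor(redundancy / 2) + 1;
  const counts = new Map<string, number>();
  for (const digest of digests) {
    const n = (counts.get(digest) ?? 0) + 1;
    counts.set(digest, n);
    if (n >= needed) return digest;
  }
  return null;
}

// Delay before retry number `attempt` (0-based): doubles each time, capped.
// baseMs and capMs are illustrative defaults.
function backoffDelayMs(attempt: number, baseMs = 500, capMs = 30_000): number {
  return Math.min(capMs, baseMs * 2 ** attempt);
}
```

A task whose retries are exhausted without reaching quorum would then be a natural candidate for the dead-letter queue.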

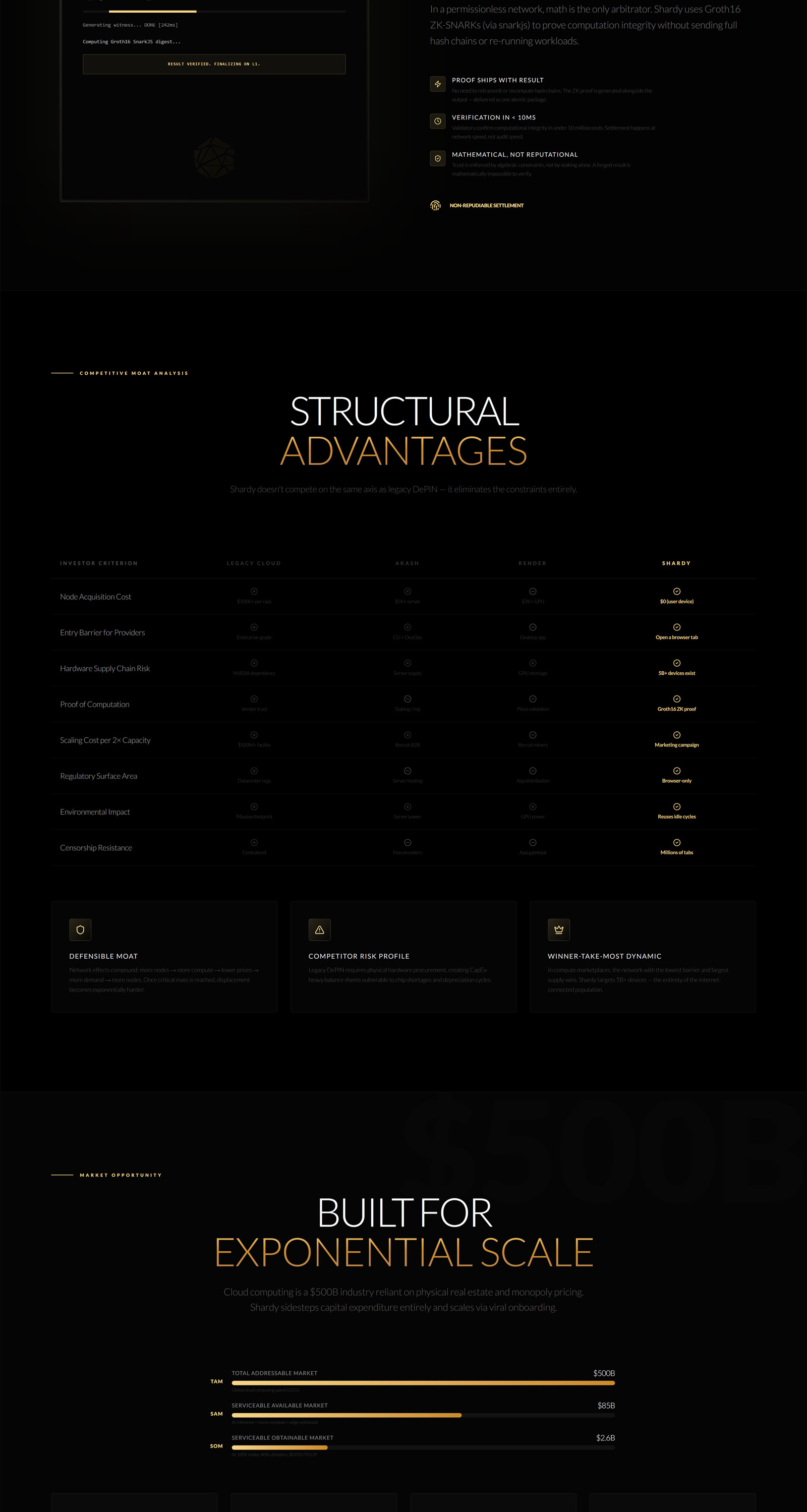

Shardy’s security and correctness hinge on zk-SNARK integration. Workers generate Groth16 proofs locally using a compact Circom circuit that binds taskId, seed, outputLen, and resultDigest into a single constraint system. The orchestrator keeps a manifest of verifier versions so that keys can be rotated without downtime, and a proof-replay guard in the SQLite schema rejects double submissions.
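The intake checks described above might look roughly like the following sketch. It uses an in-memory `Set` as a stand-in for the SQLite-backed replay guard (in a database, a uniqueness constraint on the same key would play that role), and every name here, including `ProofSubmission`, `ProofIntake`, and the field layout, is hypothetical. Actual Groth16 verification is out of scope for the sketch and would run before a submission is accepted.

```typescript
// Hypothetical submission record; field names are illustrative only.
interface ProofSubmission {
  taskId: string;
  workerId: string;
  proofHash: string; // e.g. a hex digest of the serialized proof
  verifierVersion: string; // which verification key the proof targets
}

type Verdict = "accepted" | "replay" | "unknown-verifier";

class ProofIntake {
  // In-memory stand-in for the persistent replay guard.
  private readonly seen = new Set<string>();
  // Versions currently listed in the verifier manifest.
  private readonly activeVerifiers: Set<string>;

  constructor(activeVerifiers: Iterable<string>) {
    this.activeVerifiers = new Set(activeVerifiers);
  }

  submit(s: ProofSubmission): Verdict {
    if (!this.activeVerifiers.has(s.verifierVersion)) return "unknown-verifier";
    const key = `${s.taskId}:${s.proofHash}`;
    if (this.seen.has(key)) return "replay";
    this.seen.add(key);
    return "accepted";
  }

  // Key rotation: list the new version first, retire the old one later,
  // so in-flight proofs against the old key are still accepted meanwhile.
  addVerifier(version: string): void {
    this.activeVerifiers.add(version);
  }

  retireVerifier(version: string): void {
    this.activeVerifiers.delete(version);
  }
}
```

Keeping old and new verifier versions active at the same time is one way a manifest can allow rotation without rejecting proofs that were generated just before the switch.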

Key engineering challenges included maintaining determinism across GPU vendors, minimizing memory copies in the browser, and keeping peer discovery resilient in restrictive networks. The solutions were EMA smoothing for numerical stability, zero-copy SharedArrayBuffer paths and OPFS buffering for I/O-heavy tasks, and multi-protocol discovery that combines mDNS with Kademlia. The result is a permissionless, cost-efficient compute mesh that provides cryptographic correctness guarantees and, for many workloads, real-world performance comparable to centralized clouds.
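For reference, an exponential moving average (EMA) follows the standard recurrence s_t = alpha * x_t + (1 - alpha) * s_(t-1), seeded with the first sample. The sketch below shows that recurrence in TypeScript for readability. Shardy's version is described as living in the Rust/WASM runtime, and the function name and the choice of `alpha` here are assumptions for illustration.

```typescript
// Standard EMA: s_0 = x_0, s_t = alpha * x_t + (1 - alpha) * s_(t-1).
// alpha near 1 tracks the input closely; alpha near 0 smooths more heavily.
function emaSmooth(values: number[], alpha: number): number[] {
  if (!(alpha > 0 && alpha <= 1)) {
    throw new RangeError("alpha must be in (0, 1]");
  }
  const out: number[] = [];
  let prev: number | undefined;
  for (const x of values) {
    prev = prev === undefined ? x : alpha * x + (1 - alpha) * prev;
    out.push(prev);
  }
  return out;
}
```

With `alpha = 0.5`, the input `[1, 2, 3]` smooths to `[1, 1.5, 2.25]`.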